*: equal contributions.

Abstract

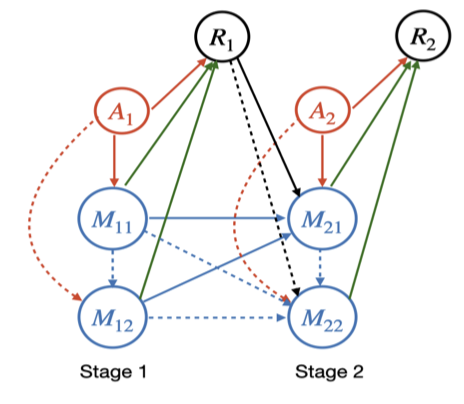

Mediation analysis is an important analytic tool used across a broad range of scientific applications. In this article, we study mediation analysis when the mediators are multivariate and conditionally dependent, and when the variables are observed over multiple time points. The problem is challenging because the effect of a mediator involves not only the path from the treatment to that mediator at the current time point, but also all paths leading into that mediator from its upstream mediators, as well as the carryover effects from all previous time points. We propose a novel multivariate dynamic mediation analysis approach. Drawing inspiration from the Markov decision process model frequently employed in reinforcement learning, we introduce a Markov mediation process paired with a system of time-varying linear structural equation models to formulate the problem. We then formally define the individual mediation effect, building on the ideas of simultaneous interventions and intervention calculus. Next, we derive a closed-form expression for this effect and propose an iterative estimation procedure under the Markov mediation process model. We study both the asymptotic properties and the empirical performance of the proposed estimator, and illustrate our method with a mobile health application.

Keywords

Longitudinal data, Markov process, Mediation analysis, Mobile health, Reinforcement learning