Abstract

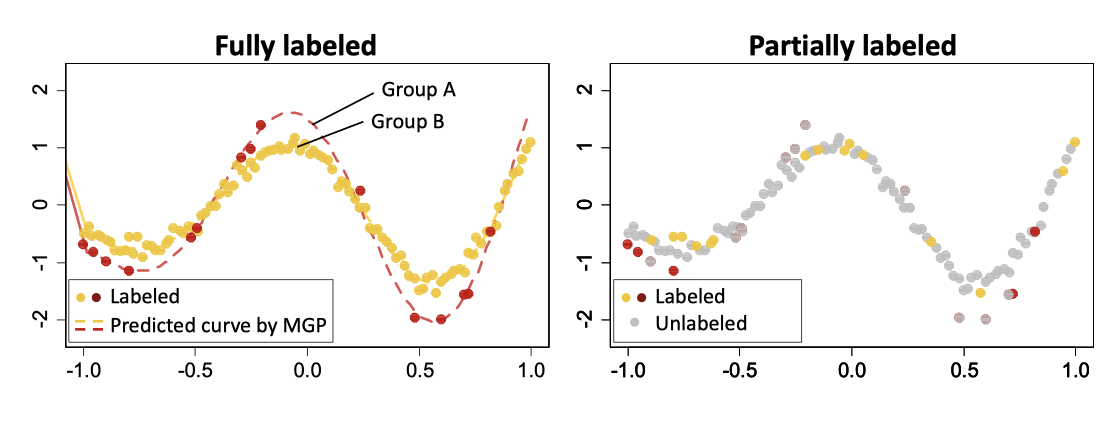

Multi-output regression seeks to borrow strength and leverage commonalities across different but related outputs in order to improve learning and prediction accuracy. A fundamental assumption is that the output/group membership labels of all observations are known. This assumption is often violated in real applications. For instance, in healthcare datasets, sensitive attributes such as ethnicity are often missing or unreported. To address this, we introduce a weakly-supervised multi-output model based on dependent Gaussian processes. Our approach can leverage data with incomplete group labels, or with only prior beliefs about group memberships, to enhance accuracy across all outputs. Through extensive simulations and case studies on Insulin, Testosterone and Bodyfat datasets, we show that our model excels in multi-output settings with missing labels while remaining competitive in traditional fully labeled settings. We end by highlighting the possible use of our approach in fair inference and sequential decision-making.

Keywords

Weakly-supervised learning, Multi-output regression, Gaussian process, Real-life applications